(5) Caches are loaded in terms of blocks. A block is the smallest unit that may be copied to or from memory. Each block contains some number of words; how many depends on the block size.
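To make the block/word relationship concrete, here is a minimal sketch of how a byte address splits into a block number and a position within that block. The 4-byte word and 32-byte block are assumptions picked for illustration, not values from the text, and the function names are hypothetical.

```python
# Hypothetical parameters, chosen only for illustration.
WORD_BYTES = 4     # assumed word size in bytes
BLOCK_BYTES = 32   # assumed block size in bytes (a multiple of the word size)

WORDS_PER_BLOCK = BLOCK_BYTES // WORD_BYTES


def split_address(addr):
    """Return (block_number, word_in_block, byte_in_word) for a byte address."""
    block_number, offset = divmod(addr, BLOCK_BYTES)
    word_in_block, byte_in_word = divmod(offset, WORD_BYTES)
    return block_number, word_in_block, byte_in_word


def block_range(addr):
    """Return the byte range [start, end) copied when the block holding addr is loaded."""
    start = (addr // BLOCK_BYTES) * BLOCK_BYTES
    return start, start + BLOCK_BYTES


if __name__ == "__main__":
    print(WORDS_PER_BLOCK)       # 8
    print(split_address(100))    # (3, 1, 0)
    print(block_range(100))      # (96, 128)
```

Under these assumptions, touching byte 100 pulls in all of bytes 96 through 127, because the block is the smallest unit that gets copied.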

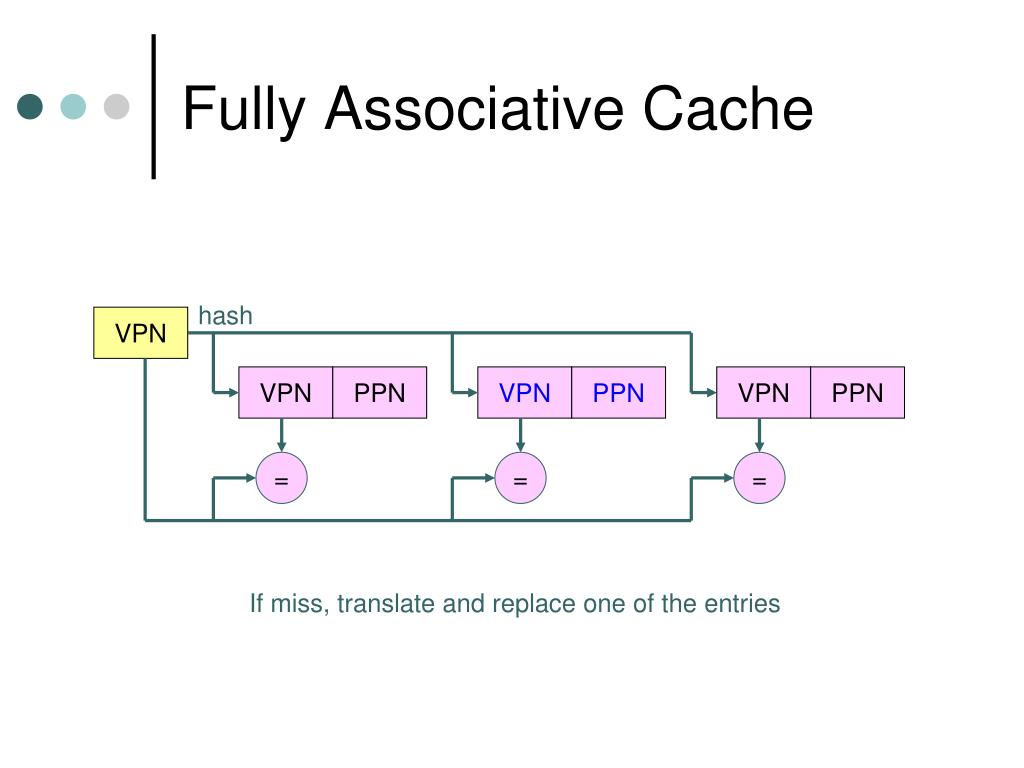

Caches work by mapping blocks of slow main RAM into fast cache RAM. This is accomplished by copying the contents of those blocks into the faster cache.

(2) You usually can't improve anything in computers without giving up a little of something else. If you could, somebody would already have done it and you wouldn't be debating it. For instance, one might ask why direct-mapped and set-associative caches (see below) would even need to exist when fully associative caches are so much more flexible. There must be a pretty damn good reason, since modern CPUs almost exclusively use set-associative and direct-mapped caches.

(3) Measures such as "work per cycle" or "instructions per clock" are meaningless metrics for comparing different architectures. It isn't just a matter of cranking the clock speed up. (This is relevant to comparisons in H&P of the 200 MHz 21064 Alpha AXP to the 70 MHz IBM POWER2.)

(4) All caches are broken into blocks of fixed size. Except as noted, the size of every cache is an integer multiple of the block size. Caches usually contain a power-of-2 number of blocks, but this isn't a requirement.
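The flexibility difference between the three cache organizations can be sketched as a single placement rule. This is an illustrative model only: it assumes the common "block number modulo number of sets" mapping, and the function name `candidate_frames` and its parameters are hypothetical.

```python
def candidate_frames(block_number, num_blocks, ways):
    """List the cache block frames where a given memory block may be placed.

    ways == 1           -> direct mapped (exactly one possible frame)
    1 < ways < num_blocks -> set associative (any frame within one set)
    ways == num_blocks  -> fully associative (any frame in the cache)

    Assumes the usual modulo mapping from block number to set index.
    """
    if ways < 1 or num_blocks % ways != 0:
        raise ValueError("num_blocks must be a positive multiple of ways")
    num_sets = num_blocks // ways
    set_index = block_number % num_sets
    first_frame = set_index * ways
    return list(range(first_frame, first_frame + ways))


if __name__ == "__main__":
    # Memory block 12 going into a cache with 8 block frames.
    print(candidate_frames(12, 8, 1))  # [4]
    print(candidate_frames(12, 8, 2))  # [0, 1]
    print(candidate_frames(12, 8, 8))  # [0, 1, 2, 3, 4, 5, 6, 7]
```

The length of the returned list is also the number of frames that have to be checked on a lookup. That is one way to see the trade-off in point (2): more placement freedom means more places to search.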

What are some key points I need to understand this section? What's different about a fully associative cache?

All memory is addressed, stored, accessed, written to, copied, moved, and so on in a linear way. Rectangular arrays of numbers are stored in memory as a series of linearly appended rows, square bitmaps are stored one scanline after another, and so on.
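The linear layout of rows and scanlines can be sketched as a small address calculation. This assumes row-major storage with no padding between rows; the function names are hypothetical.

```python
def linear_offset(row, col, width, elem_bytes=1):
    """Byte offset of element (row, col) in a row-major array with `width` columns.

    Assumes rows are appended one after another with no padding.
    """
    return (row * width + col) * elem_bytes


def row_offsets(row, width):
    """Offsets visited when walking along one row (consecutive addresses)."""
    return [linear_offset(row, c, width) for c in range(width)]


def column_offsets(col, width, height):
    """Offsets visited when walking down one column (a stride of `width`)."""
    return [linear_offset(r, col, width) for r in range(height)]


if __name__ == "__main__":
    print(linear_offset(2, 3, width=10, elem_bytes=4))  # 92
    print(row_offsets(1, width=4))                      # [4, 5, 6, 7]
    print(column_offsets(1, width=4, height=3))         # [1, 5, 9]
```

Walking along a row touches neighbouring addresses, which tend to fall in the same block, while walking down a column jumps by a full row each step.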
